Today brought the second GTC of 2021, and NVIDIA CEO Jensen Huang did not disappoint with his announcements!

AI inferencing is being deployed to enhance consumer lives with smart, real-time experiences and to gain insights from trillions of IoT sensors and billions of cameras. Increasingly, enterprises are moving deployments to the edge to avoid network latency in real-time applications while managing their cloud costs. With trillions of inferences per day, modern enterprises and cloud service providers (CSPs) need accelerators that can target inference applications at scale across use cases, including edge, retail recommendations, and intelligent video analytics (IVA).

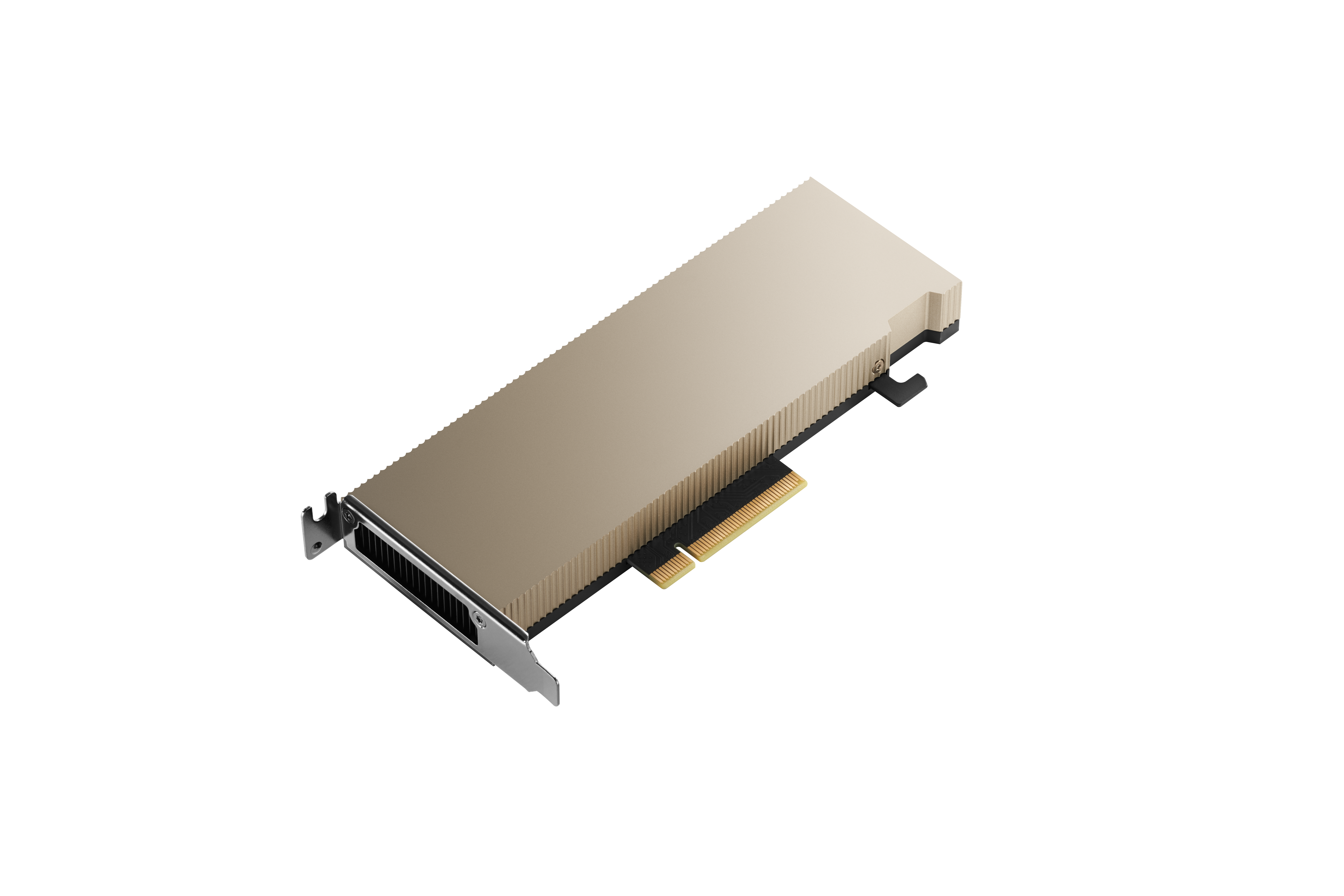

The NVIDIA A2 Tensor Core GPU provides entry-level inference with low power, a small footprint, and high performance for IVA with NVIDIA AI at the edge. Featuring a low-profile PCIe Gen4 card and a low 40–60 watt (W) configurable thermal design power (TDP) capability, the A2 brings versatile inference acceleration to any server for deployment at scale.

A2 Tensor Core GPUs add entry-level inference to the NVIDIA AI portfolio, which also includes A100 and A30 Tensor Core GPUs. A100 features the highest inference performance for the most compute-intensive applications, and A30 brings optimal inference performance for mainstream servers. Together, A2, A30, and A100 deliver the most comprehensive AI inference offering across edge, data center, and cloud.

A30X

The A30X combines the NVIDIA A30 Tensor Core GPU with the BlueField-2 DPU. The design of this accelerator provides a good balance of compute and I/O performance for use cases such as 5G vRAN and AI-based cybersecurity. Multiple services can run on the GPU, with the low latency and predictable performance provided by the onboard PCIe switch.

A100X

The A100X brings together the power of the NVIDIA A100 Tensor Core GPU with the BlueField-2 DPU. The A100X is ideal for use cases where the compute demands are more intensive. Examples include 5G with massive MIMO capabilities, AI-on-5G deployments, and specialized workloads such as signal processing and multi-node training.

NVIDIA Quantum-2 InfiniBand Platform

The NVIDIA Quantum-2 InfiniBand platform is an end-to-end network solution that includes high-bandwidth, ultra-low latency adapters, switches and cables, and comprehensive software for delivering the highest data center performance. NVIDIA Quantum gives data centers the blueprint for a robust, secure infrastructure that supports develop-to-deploy implementations across all workloads and storage requirements. It is built for scale, easy to configure and automate, decreases deployment time, and improves time to value.