Latency & Bandwidth

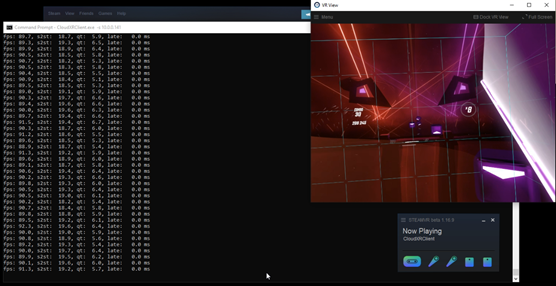

Firstly, a latency test was completed using Beat Saber as a prime example of where response time is critical. Beat Saber is a VR rhythm-based game which requires users to 'slash' notes coming towards them in time with the selected song. This is a great test because latency not only causes the notes shown onscreen to drift out of sync with the audio of the song, but also delays the player's slashes being recorded on the server side. If a slash is recorded too late, the player will lose their streak and likely get very frustrated with the game.

CloudXR sessions were compared to direct experiences from tethered VR on the VIVE Pro and from the Oculus Quest 2 all-in-one headset. A mobile experience via the OnePlus 7T Pro was also briefly tested; however, there is sadly no motion controller support here. It can be implemented using Bluetooth controllers, but this is not yet supported by the ready-to-go package provided by NVIDIA. This means the game is not fully playable on mobile, but we can still get an idea of visual performance.

Figure 1 - Latency test using Beat Saber with a Tethered HTC VIVE

For this test, we also used a WiGig adapter for the tethered headset to see if this made any difference to recorded latency or playability.

We were pleasantly surprised to find that there was no difference in performance, connectivity or response time when using CloudXR's remote streaming compared to any of the native experiences. The game is fully playable, very enjoyable and provides a generally excellent experience.

Frame rates were excellent and latency was well within limits; the fact that this was a cloud-delivered session was undetectable to the player.

In order to understand the impact of network bandwidth on CloudXR, a further test was carried out where the network was forced to operate below the recommended minimum of 50Mbps, at 30Mbps and at 10Mbps. The result was lag in the game's video and audio streams of anywhere between 1 and 3 seconds. This happened very consistently and made Beat Saber quite unplayable. The recommended connection speed for a CloudXR session is 60Mbps, and it is also recommended to use a single dedicated WiFi access point. 5G does not carry the same one-to-one access point recommendation, but it is important to consider network congestion in any remote streaming design.
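
As a rough illustration of these thresholds, the Python sketch below classifies a measured link speed against the figures quoted above. The function name and the category labels are hypothetical and are not part of CloudXR; only the 50Mbps and 60Mbps values come from the text.

```python
# Thresholds quoted in the text above, in Mbps. Everything else in this
# sketch (names, labels) is an illustrative assumption, not a CloudXR API.
MINIMUM_MBPS = 50      # recommended minimum
RECOMMENDED_MBPS = 60  # recommended connection speed


def classify_link(measured_mbps: float) -> str:
    """Return a rough label for a measured link speed (hypothetical helper)."""
    if measured_mbps < 0:
        raise ValueError("bandwidth cannot be negative")
    if measured_mbps < MINIMUM_MBPS:
        # The 30Mbps and 10Mbps test cases fall into this band.
        return "below minimum"
    if measured_mbps < RECOMMENDED_MBPS:
        return "minimum met"
    return "recommended"


if __name__ == "__main__":
    for speed in (10, 30, 50, 60):
        print(f"{speed} Mbps: {classify_link(speed)}")
```

A check like this could run before a session starts, so that a user on a congested link is warned rather than dropped into a stream that lags.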

Graphical Performance

Satisfied with latency, we moved on to testing the graphical experience, comparing the native devices with CloudXR streaming sessions. To create a level playing field, we found a common application that exists in the native libraries of all three devices: Epic Roller Coasters. It's handily available on Steam, the Oculus store and Google Play. We then settled on the same track, Rock Canyon, and inspected the visual quality of the experience as delivered natively and then on the same device but delivered remotely via CloudXR from a high-performance GPU and processor.

After our initial rounds of testing using the tethered headset, there was no noticeable difference in performance between a local Steam session and one delivered via CloudXR. This is exactly what we were hoping for, as both the tethered VR and the CloudXR systems use dedicated graphics accelerators to generate high quality 3D visuals. It is also important to note that streaming from one Windows/SteamVR system to another Windows system is possible, so we are not limited to streaming to mobile devices. This is handy for laptops with limited GPU acceleration: users who are out on the road can tap into a full tethered headset experience from their laptop or low powered desktop PC and still enjoy great visuals. It could be a great visual tool for demonstrating new designs to customers of a wide variety of customised products including buildings, cars, kitchens and so on.

NOTE: the below images are larger than the windows.

Figure 2 – HTC Vive Pro with an NVIDIA 1080Ti GPU – native experience

Figure 3 – HTC Vive Pro with an RTX 6000 – CloudXR delivered

Figure 4 – Oculus Quest 2 - native experience

Figure 5 – Oculus Quest 2 with an RTX 6000 - CloudXR delivered

Compared to the native experience of the Oculus Quest 2 all-in-one system, it was clear to see that CloudXR significantly enhanced the graphical experience. Image quality and resolution were greatly improved over the native experience, especially in the water texture.

Figure 6 – OnePlus 7T Pro - native experience

Figure 7 – OnePlus 7T Pro with an RTX 6000 – CloudXR delivered

The biggest leap in visual quality came when adding CloudXR to the mobile native experience of the OnePlus 7T Pro. Its ability to use a dedicated workstation GPU made the mobile GPU pale in comparison - basically there was no contest.

There are some challenges, however: the application needs controls to work, and these are not available on mobile devices as standard. The CloudXR 2.1 documentation from NVIDIA recommends using a VNC client to access the system CloudXR is running on and using keyboard input to get around this. Obviously, this is not the most practical approach for a mobile device on its own, but it is possible (we will touch on this a bit later).

Professional Applications

For a professional application, we selected Blender as another key target for the CloudXR wireless experience. Blender is a free and open-source graphical toolset for creating 3D objects and animations for a variety of target markets. It worked perfectly: using both the all-in-one Oculus Quest 2 headset and the mobile client, we were able to inspect a scene we had created, visualised in real time.

Blender takes full advantage of the features of enterprise-grade professional GPUs, and this extension can allow for a better creative flow without being restricted by the tethered headset or lighthouses of a traditional high performance VR workstation.

NVIDIA GRID & Virtualisation

In order to achieve higher densities, we often deploy solutions using either GPU passthrough or VDI with NVIDIA's GPU virtualisation technology, GRID.

We were delighted to find that performance was not hindered when using passthrough. This was also true when using vGPU profiles; we were able to run up to 2 users on a single Quadro RTX GPU.

Unfortunately, with any more than 2 sessions per GPU the streaming quality suffers greatly, giving very blocky graphics, although interestingly the latency was unaffected. This is related to the GPU encoding/decoding engines. The majority (if not all) of graphics GPUs have a single encoder and 1-2 decoder engines. As of now, GPUs have not been optimised for a high number of simultaneous streams over a network to a multitude of XR devices. For example, consumer cards officially support a maximum of 3 streams, and that is without the overhead of XR software. XR also increases the load on the GPU, so with streaming adding to the utilisation of the 3D workload, it is more difficult to support a higher number of active streams. Other factors such as heavy network traffic can also affect stream quality.

We found another issue with all-in-one devices. A wireless access point was required for the device to connect, and other traffic on the access point degraded the quality of the stream, dependent on the amount of network traffic. Mobile devices were much more lenient: 3 could be connected at once with no drop in quality.

Mobile Support & Controllers

As mentioned previously, we had a little trouble getting controllers to work with the CloudXR mobile client; controllers are essential to certain applications originally built for SteamVR. We were able to remote into the system and use a keyboard to allow interaction and enable our testing, but this is not a viable long-term solution. It is possible to use Bluetooth controllers by modifying the SDK provided by NVIDIA, but that requires programming knowledge in Android Studio. According to NVIDIA developers, the inputs would need to be customised for CloudXR to understand them, but this would fully open up the experience, as long as the software being streamed can take advantage of it. At the time of writing, an off-the-shelf Bluetooth mobile VR controller with 3D tracking (akin to the Oculus or HTC Vive tracked controllers) was not available.

Note - All experiences were tested with CloudXR 2.1; mobile was also tested with CloudXR 3.1.

Boston Labs Solution - The MU-CXR

CloudXR supports a wide range of compatible GPUs from NVIDIA's Quadro RTX range and the MU-CXR can support the full range. There are few requirements that cannot be satisfied with the flexibility and range of the product, although options can sometimes be overwhelming.

Don't despair, however; our experienced team will be there every step of the way, helping you to make the right selection. Equally, our engineers deliver a complete end-to-end service, from consultation through to installation and maintenance, simplifying your experience and enabling you to focus on the most important piece: your applications and virtual experiences.

At Boston Labs we have extensively tested a wide range of available servers and solutions to select an optimum building block and standalone solution for CloudXR deployments. The resulting product is the Multi-User CloudXR or MU-CXR for short.

The MU-CXR has been developed, tested, and optimised for CloudXR from the ground up. It's ready for any XR capable device, be it tethered, all-in-one or even mobile clients. It can comfortably house 8 enterprise GPUs without sacrificing power or performance and uses the full processing power of up to two AMD EPYC 7003 series (codename Milan) CPUs with up to 64 cores each. It has up to 24 SATA SSD/HDD slots, 4 of which are native NVMe capable, and it can take up to 8TB of ECC memory, providing a wide range of performance options to suit any application.

Overall, up to 16 CloudXR sessions can be hosted from just one MU-CXR enclosure, enabling great density and ease of management through consolidation.
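
The 16-session figure is consistent with the numbers reported earlier: 8 GPUs per enclosure, and up to 2 CloudXR sessions per GPU before stream quality degraded. A minimal sketch of that arithmetic follows, assuming those two figures are the only limiting factors; the function and parameter names are hypothetical and chosen for illustration only.

```python
import math

GPUS_PER_ENCLOSURE = 8  # GPUs housed in one MU-CXR, per the description above
SESSIONS_PER_GPU = 2    # sessions per GPU before quality dropped in our tests


def max_sessions(gpus: int = GPUS_PER_ENCLOSURE,
                 per_gpu: int = SESSIONS_PER_GPU) -> int:
    """Concurrent sessions one enclosure can host under these assumptions."""
    return gpus * per_gpu


def enclosures_needed(users: int,
                      gpus: int = GPUS_PER_ENCLOSURE,
                      per_gpu: int = SESSIONS_PER_GPU) -> int:
    """Enclosures required for a number of concurrent users, rounded up."""
    if users < 0:
        raise ValueError("users cannot be negative")
    return math.ceil(users / max_sessions(gpus, per_gpu))


if __name__ == "__main__":
    print(max_sessions())         # 8 GPUs x 2 sessions each
    print(enclosures_needed(40))  # 40 users at 16 sessions per enclosure
```

In practice, network capacity and the 3D workload of each application may lower the achievable density, so the result is best read as an upper bound.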

As a tailor-made product, the MU-CXR can be further expanded and/or customised on demand.

Figure 8 - MU-CXR Flagship Solution

Conclusion

CloudXR has amazing potential to stream full GPU experiences, be it for entertainment or content creation, to almost any location without the need for a complex setup or a pre-wired "lighthoused" working space. The experience can be viewed on another tethered device, an all-in-one system or a mobile device. By using a Pascal or later NVIDIA GPU and a system which meets the requirements of the software being streamed, CloudXR can be used almost anywhere.

The limitations of CloudXR are that both the server and client require a strong WiFi, 5G or ethernet connection, that it requires SteamVR to operate, and that it can only be used at a 1-1 ratio per Windows OS. Another limiting factor is the incompatibility of some client devices with software that requires controller input.

Overall, it opens a lot of possibilities to remove tethered restrictions, freeing up when and where XR content can be consumed, on any XR capable device. With the introduction of 5G networks, this can be a huge stepping stone towards XR freedom anywhere. Until then, a server dedicated to CloudXR is still a huge step towards that goal, bringing freedom and GPU performance to devices by breaking down the walls and unifying the best of everything.

Test drives of CloudXR are already available via Boston Labs, our onsite R&D and test facility. The team are ready to enable customers to test-drive the latest technology on-premises or remotely via our fast internet connectivity. However, due to the nature of CloudXR, with its many moving parts and connectivity requirements, we recommend a physical demonstration.

If you are ready to start your XR journey, then please get in touch either by email or by calling us on 01727 876100 and one of our experienced sales engineers will happily guide you to your perfect tailored solution and invite you in for a demo.

Written by:

Peter Wilsher

Junior Field Application Engineer